For more background read this post and this one.

Last weekend we hosted the second Aberdeen Air Quality hack weekend in recent months. Coming out of it, there are a number of tasks which we need to work on next. While some of these fall to the community to deliver, there are also significant opportunities for us to work with partners.

The Website

While the Air Aberdeen website is better, we still need to apply the styling that was created at the weekend.

Humidity Measurement

We’ve established that the DHT22 chips which we use in the standard Luftdaten device model struggle in our maritime climate. They get saturated and stop reporting meaningful values. The fix is to use BME280 chips in their place. These will continue to give humidity and temperature readings, plus pressure, but because they use a different technology they will handle the humidity better. Knowing local humidity is important (see weather data below). So, we need to adapt the design of all new devices to use these chips, and retrofit the existing devices with them.

Placement of new devices

We launched in February with a target of 50 sensors by the end of June and 100 by the end of the year. So far attendees have built 55 devices of which 34 are currently, or have recently been, live. That leaves 21 in people’s hands that are still to be registered and turned on. We’re offering help to those hosts to make them live.

Further, with the generous sponsorship of Converged, Codify, and now IFB, we will shortly build 30 more devices, which will take us to a total of 85. We’ve had an approach from a local company who may be able to sponsor another 40. So, it looks like we will soon exceed the 100 target. Where do we locate these new ones? We need a plan to place them strategically around the city where they would be most useful, which is where the map, above, comes in.

Community plus council?

We really want to work with the local authority on several aspects of the project. It’s not them versus us. We all gain by working together. There are several areas that we could collaborate on, in addition to the strategic placement of future devices.

For example, we’ve been in discussions with the local authority’s education service with a view to siting a box on every one of the 60 schools in the city. That would take us to about 185 devices – far in excess of the target. Doing that needs funding, and while the technology challenge to get them on the network is trivial, ensuring that the devices survive on the exterior of the buildings might be a challenge.

Also, we’ve had no response to our request to co-locate one of our devices at a roadside monitoring station, which would allow us to check the correlation between the outputs of the two. We need to pursue that again.

Comparing our data suggests that we can more than fill in gaps in the local council’s data. The map of the central part of Aberdeen in the image above shows all six official sensors (green) and 12 of the 24 community sensors that we have in the city (red). You can also see great gaps where there are no sensors, which again shows the need for strategic placement of the new ones.

We’ve calculated that with a hundred sensors we’d have 84,096,000 data observations per year for the city, all as open data. The local authority, with six sensors each publishing three items of data hourly, has 157,680 readings per annum, which is 0.18% of the community readings. If we reach 185 devices then ACC’s data would be about 0.10%, or 1/1000th, of the community data. The community data, besides being properly open-licensed, also has much greater granularity and geographic spread.
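The arithmetic behind these figures can be checked with a short sketch. The post does not say how the community total breaks down, so the per-sensor rate here is an assumption: four values per report, with a report every 150 seconds, which is one breakdown consistent with the 84,096,000 figure. The function names are illustrative only.

```python
# Assumption (not stated in the post): each community sensor publishes
# 4 values per report, one report every 150 seconds. This is one
# breakdown that reproduces the 84,096,000 figure for 100 sensors.
VALUES_PER_REPORT = 4
REPORT_INTERVAL_S = 150

HOURS_PER_YEAR = 24 * 365  # non-leap year


def community_observations(sensors: int) -> int:
    """Yearly observations from the given number of community sensors."""
    reports_per_hour = 3600 // REPORT_INTERVAL_S
    return sensors * VALUES_PER_REPORT * reports_per_hour * HOURS_PER_YEAR


def council_observations(sensors: int = 6, values_per_hour: int = 3) -> int:
    """Yearly readings from the official sensors (figures from the post)."""
    return sensors * values_per_hour * HOURS_PER_YEAR


def council_share_percent(community_sensors: int) -> float:
    """Council readings as a percentage of community observations."""
    return 100 * council_observations() / community_observations(community_sensors)


if __name__ == "__main__":
    for n in (100, 185):
        print(f"{n} sensors: {community_observations(n):,} observations, "
              f"council share {council_share_percent(n):.4f}%")
```

Changing `REPORT_INTERVAL_S` or `VALUES_PER_REPORT` shows how sensitive the comparison is to the reporting rate of the devices.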

Weather data

We need to ensure that we gather historic and new weather data and use that to check if adjustments are needed to PM values. Given that the one-person team who was going to work on this at CTC16 disappeared, we need to first set up that weather data gathering, then apply some algorithms to adjust the data when needed, then make that data available.
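As a first step towards that check, a minimal sketch might simply flag PM readings that were taken when humidity was high, so they can be reviewed before any adjustment is applied. The 85% threshold, the `Reading` fields, and the function names are all hypothetical; the project has not yet chosen an adjustment algorithm, and this does not attempt one.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple

# Hypothetical threshold: relative humidity (%) at or above which a PM
# reading is flagged for review. Not a value agreed by the project.
HUMIDITY_FLAG_THRESHOLD = 85.0


@dataclass
class Reading:
    sensor_id: str
    pm25: float      # PM2.5 concentration, micrograms per cubic metre
    humidity: float  # relative humidity, percent


def needs_review(reading: Reading,
                 threshold: float = HUMIDITY_FLAG_THRESHOLD) -> bool:
    """Return True when humidity is high enough to make the PM value suspect."""
    return reading.humidity >= threshold


def flag_readings(readings: Iterable[Reading]) -> List[Tuple[Reading, bool]]:
    """Pair each reading with its review flag, preserving input order."""
    return [(r, needs_review(r)) for r in readings]


if __name__ == "__main__":
    sample = [
        Reading("sensor-a", pm25=12.0, humidity=60.0),
        Reading("sensor-b", pm25=40.0, humidity=97.0),
    ]
    for reading, flagged in flag_readings(sample):
        print(reading.sensor_id, "review" if flagged else "ok")
```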

Engagement with Academia

We need to get the two local universities aboard, particularly on the data science work. We have some academics and post-grads who attend our events, but how do we get the data used in classes and projects? How do we attract more students to work with us? And, again, how do we get schools not only hosting the devices but also pupils using the data to understand their local environment?

The cool stuff

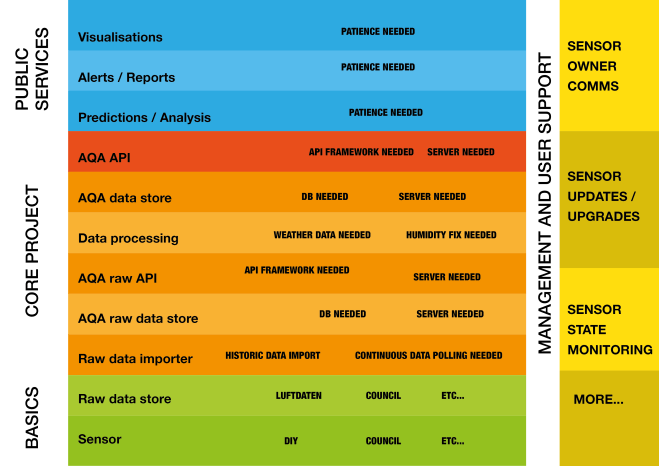

Finally, when we have the data collected, cleaned, and curated, and APIs in place (from the green up through orange to red layers below), we can start to build some cool things (the blue layers).

These might include, but are not limited to:

- data science-driven predictive models to forecast AQ in local areas,

- public health alerts,

- mobile apps to guide you where it is safe to walk, cycle, jog or suggest cleaner routes to school for children,

- logging AQ over time and measuring changes,

- correlating local AQ with hospital admissions for COPD and other health conditions,

- informing debate and the formulation of local government strategy and policy.

As we saw at CTC16, we could also provide the basis for people to innovate using the data. One great example was the hacked LED table-top lamp which changes colour depending on the AQ outside. Others want to develop personalised dashboards.

The possibilities, as they say, are endless.